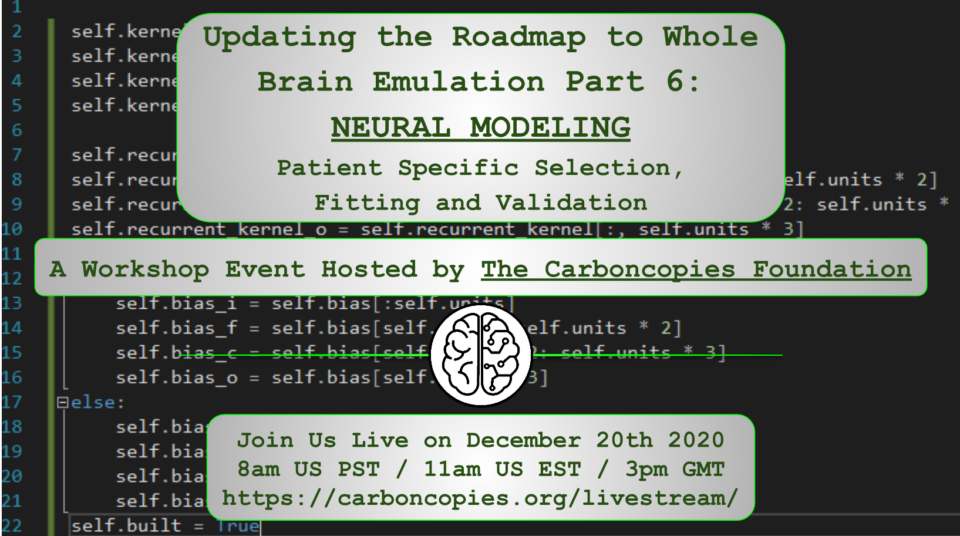

Updating the Roadmap to Whole Brain Emulation Part 6:

NEURAL MODELING

Patient Specific Selection, Fitting and Validation

The Carboncopies Foundation is continuing its workshop series on updating the Roadmap to Whole Brain Emulation, the first version of which was published in 2008 as the Oxford University manuscript, "Whole Brain Emulation: A Roadmap."

This upcoming workshop will focus on the practical problems of model selection and model fitting. Brain function is currently modeled with software simulations that represent the dynamic processes in neurons and synapses. These simulations transform input signals that are either obtained from experimental recordings or generated in software. The ultimate goal of Whole Brain Emulation (WBE) remains animal-specific or patient-specific emulation of the brain activity needed for cognitive function and subjective cognitive experience. We can therefore assume that a successful model will operate on spatio-temporal patterns of neural activity, and that it will include the capacity to adapt and learn that is generally referred to as brain plasticity.

We will discuss scenarios such as the following:

#1 All the Data: We set aside the problem of data acquisition and assume that we can map the connections of every neuron and record the activity of each neuron for as long as we wish, in any setting. While this is currently possible only with preparations in extremely constrained laboratory settings, it provides a useful idealized baseline. With all the data we could possibly want, what is the process for building a satisfactory brain emulation? What hurdles remain? How might we best choose the right model and model-fitting process?

#2 Ephys Data: For the brain region (or the whole brain) we want to emulate, we know the generic structure of its neural circuits, but not the specific circuitry of any individual brain. What we do have are extensive recorded samples of neuronal activity, obtained by an appropriate method such as two-photon imaging or electrophysiology. What might we hope to achieve in this case?

#3 Ultrastructure: Considering the same brain region (or the whole brain), this time we have a good generic understanding of system and circuit theory, of dynamic modes, and of the typical response profiles of the components involved. We do not have large-scale, high-resolution electrode recordings for the specific sample, but we do have its complete 3D ultrastructure, reconstructed from electron microscopy. How can this be turned into a sample-specific functional model? What might we achieve now, and what presently stands in the way?

For an emulation to work, there must be an effective procedure for modeling whatever is to be emulated. This means modeling neuronal population and circuit function to the point where the resulting operations on signals meet practical success criteria for brain emulation. Not only must the model achieve the desired signal processing within a satisfactory envelope of neurobiologically plausible outcomes, but the model-building process must also be feasible in time and resource consumption. The time taken, and the amount of data needed, to estimate model parameters (i.e., fitting or training) tend to increase exponentially with the number of parameters (the curse of dimensionality). Any model of a whole brain will have an extraordinarily large number of parameters. What is the best way to break down the modeling process so that each part can be completed in a reasonable time?
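To make the exponential growth concrete, here is a minimal sketch that assumes the crudest possible fitting strategy: an exhaustive grid search in which each parameter is tried at a fixed number of candidate values. The function name `grid_evaluations` and the choice of 10 values per parameter are hypothetical, chosen only for illustration. Other fitting methods (e.g., gradient-based optimization) scale differently, but the same combinatorial pressure is what makes breaking a model into separately fittable parts attractive.

```python
def grid_evaluations(values_per_parameter: int, num_parameters: int) -> int:
    """Number of model evaluations needed by an exhaustive grid search.

    Hypothetical illustration: each parameter is sampled at
    `values_per_parameter` points, so the full grid contains
    values_per_parameter ** num_parameters points.
    """
    return values_per_parameter ** num_parameters


if __name__ == "__main__":
    # Assumed setting: 10 candidate values per parameter.
    for d in (1, 2, 5, 10, 20):
        print(f"{d:>2} parameters -> {grid_evaluations(10, d):,} evaluations")
```

With only 20 parameters, the grid already has 10^20 points. By contrast, fitting 20 independent one-parameter parts separately would take 20 × 10 = 200 evaluations under the same assumptions.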

In this workshop, we will discuss these challenges, current advances, and how to set effective constraints, and we will attempt to outline the next crucial steps in computational modeling toward brain emulation.

This live workshop event will be held on Sunday, December 20, 2020, at 8AM PST / 11AM EST / 4PM GMT, and streamed globally through the Carboncopies Foundation YouTube channel https://carboncopies.org/livestream. We hope to see you there!

Please RSVP!